An Object Ways Product

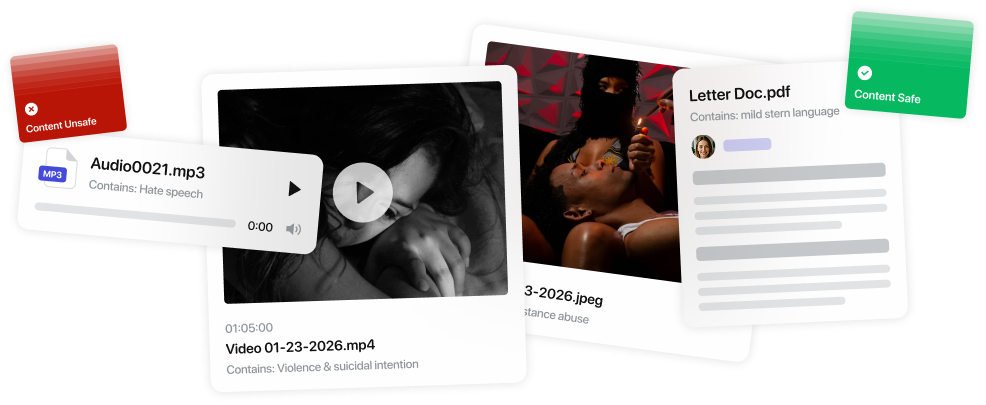

Automated Content Moderation with Accountability

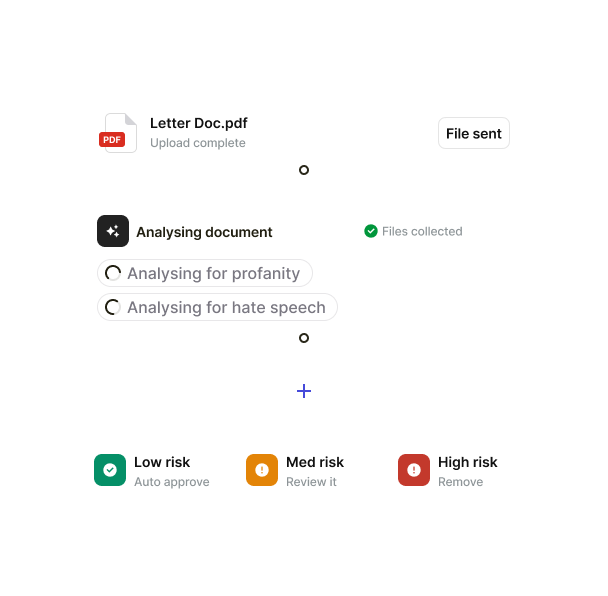

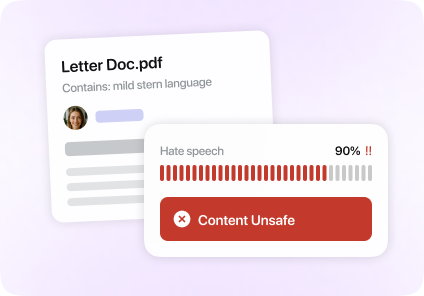

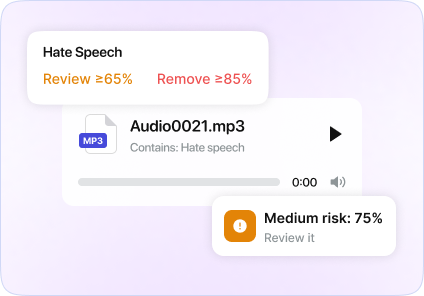

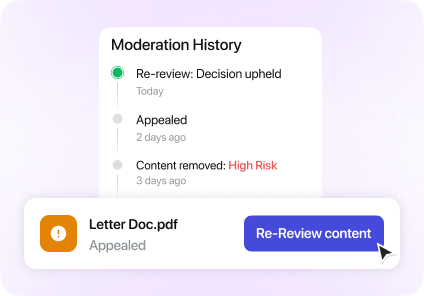

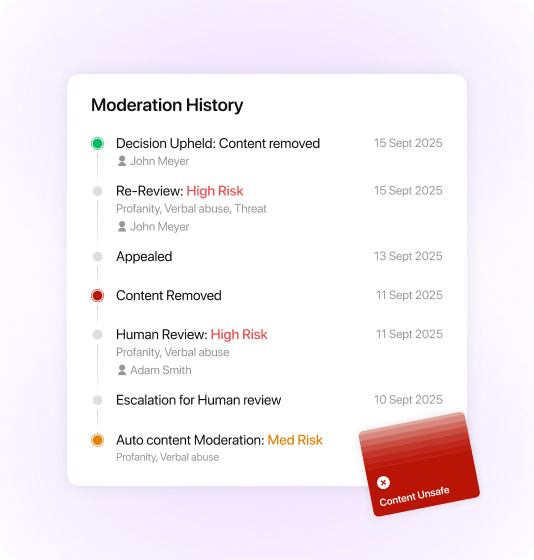

We combine automated content moderation and human oversight in a single, structured system. From initial detection through final decision and quality review, every step of the moderation lifecycle is organized, traceable, and aligned with your content moderation guidelines.