How does Safemod.AI handle content moderation?

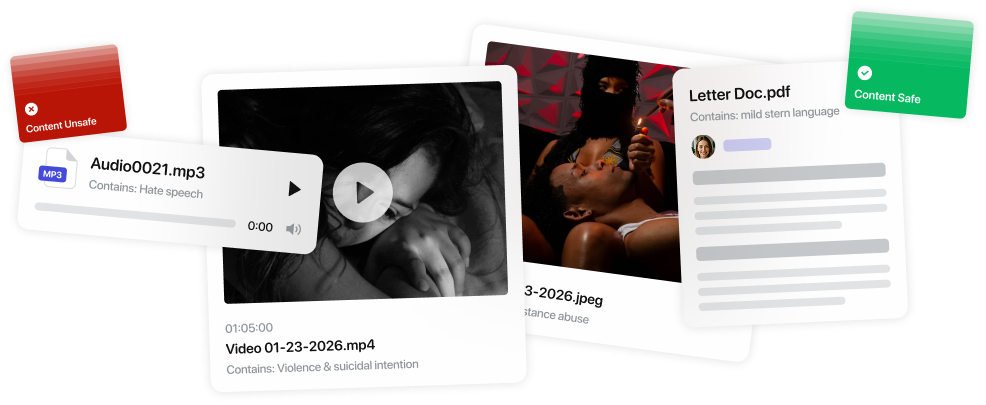

The platform uses AI models to analyze content and assign risk scores, then routes the content through predefined workflows for approval, removal, or human review based on your policies.

When is content escalated for human review?

Content is escalated when it falls into a "gray area": for example, when it has a moderate risk score and doesn’t clearly meet either the approval or the violation criteria.
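
As a rough illustration, the gray-area rule can be pictured as two thresholds on the risk score. This is only a sketch: the threshold values, the `Action` names, and the `route` function are hypothetical and are not taken from Safemod.AI's actual configuration or API.

```python
from enum import Enum


class Action(Enum):
    APPROVE = "approve"
    REMOVE = "remove"
    HUMAN_REVIEW = "human_review"


# Hypothetical thresholds; real values would come from your own policies.
APPROVE_BELOW = 0.30
REMOVE_AT_OR_ABOVE = 0.85


def route(risk_score: float) -> Action:
    """Map a risk score in [0, 1] to a moderation action."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score < APPROVE_BELOW:
        return Action.APPROVE
    if risk_score >= REMOVE_AT_OR_ABOVE:
        return Action.REMOVE
    # Anything in between is the gray area and goes to a person.
    return Action.HUMAN_REVIEW
```

With these assumed values, a score of 0.5 would be sent to human review, while 0.1 would be approved and 0.9 removed automatically.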

How are escalation decisions made?

Escalation paths are determined based on factors like risk level, category severity, region-specific policies, and the platform or use case.
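
One way to think about how these factors combine is as an ordered list of rules, where the first matching rule picks the review queue. The sketch below assumes hypothetical category names, severity rankings, regions, and queue names; none of them come from Safemod.AI itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    risk_score: float  # 0.0 to 1.0
    category: str      # e.g. "spam", "hate_speech"
    region: str        # e.g. "EU", "US"
    platform: str      # e.g. "marketplace", "chat"


# Hypothetical severity ranking per category (higher is more severe).
CATEGORY_SEVERITY = {"spam": 1, "harassment": 2, "hate_speech": 3, "child_safety": 4}

# Hypothetical regions whose policies require a dedicated queue.
REGIONAL_QUEUES = {"EU": "eu_policy_team"}


def escalation_queue(case: Case) -> str:
    """Pick a review queue; rules are checked from most to least urgent."""
    severity = CATEGORY_SEVERITY.get(case.category, 2)  # unknown category: medium
    if severity >= 4:
        return "trust_and_safety_lead"
    if case.region in REGIONAL_QUEUES:
        return REGIONAL_QUEUES[case.region]
    if severity >= 3 or case.risk_score >= 0.7:
        return "senior_reviewers"
    if case.platform == "chat":
        return "realtime_reviewers"
    return "general_reviewers"
```

The ordering of the rules is itself a policy decision: here category severity outranks region, which outranks risk level and platform, but a different deployment might order them differently.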

What do human reviewers see during review?

Reviewers are provided with the content, detected risk categories, AI model signals, and relevant policy guidelines to make informed decisions.

Can decisions be appealed or re-reviewed?

Yes. Safemod.AI supports structured appeals and re-review workflows, allowing content to be reassessed while maintaining a complete audit trail.
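
Conceptually, an audit trail of this kind behaves like an append-only history: an appeal or re-review adds a new entry instead of overwriting the earlier decision. The following is a minimal sketch under that assumption; the class names, fields, and event labels are hypothetical and do not describe Safemod.AI's data model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class AuditEvent:
    actor: str      # who acted, e.g. "model", "user:42", "reviewer:7"
    event: str      # what happened, e.g. "initial_decision", "appeal_filed"
    decision: str   # decision in force after this event
    at: datetime    # UTC timestamp


@dataclass
class ModerationCase:
    content_id: str
    history: List[AuditEvent] = field(default_factory=list)

    def record(self, actor: str, event: str, decision: str) -> None:
        """Append an event; earlier entries are never modified or removed."""
        self.history.append(
            AuditEvent(actor, event, decision, datetime.now(timezone.utc))
        )

    @property
    def current_decision(self) -> Optional[str]:
        """The decision in force is the one on the latest event."""
        return self.history[-1].decision if self.history else None
```

For example, recording an initial `"remove"` decision, then an appeal, then a re-review that ends in `"approve"` leaves three entries in `history`, with `current_decision` equal to `"approve"` and the original removal still visible in the trail.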

How does Safemod.AI ensure moderation quality?

The platform includes quality assurance tools such as spot checks, consistency validation, reviewer accuracy tracking, and policy refinement mechanisms.
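
To make reviewer accuracy tracking concrete, one simple approach is to compare each reviewer's decisions on spot-checked items against the audited outcome. The sketch below assumes spot checks are available as `(reviewer_id, reviewer_decision, audited_decision)` tuples; that input shape and the function name are illustrative assumptions, not Safemod.AI's reporting format.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def reviewer_accuracy(
    spot_checks: Iterable[Tuple[str, str, str]],
) -> Dict[str, float]:
    """Return the share of spot-checked decisions each reviewer got right."""
    totals: Dict[str, int] = defaultdict(int)
    correct: Dict[str, int] = defaultdict(int)
    for reviewer, decision, audited in spot_checks:
        totals[reviewer] += 1
        if decision == audited:
            correct[reviewer] += 1
    # Only reviewers with at least one spot check appear in the result.
    return {reviewer: correct[reviewer] / totals[reviewer] for reviewer in totals}
```

A reviewer who matched the audited outcome on one of two spot checks would score 0.5. A persistently low score may point to a training gap, or to a policy that is ambiguous and needs refinement.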

Does Safemod.AI provide transparency in moderation?

Yes. It offers full visibility into the moderation lifecycle, including detection, decisions, escalation paths, and review history, all in a centralized interface.

Can workflows be customized?

Absolutely. Moderation workflows can be adjusted based on evolving policies and requirements without disrupting ongoing operations.

How does Safemod.AI help scale moderation?

By combining automation with structured human oversight, Safemod.AI enables teams to handle large volumes of content efficiently while maintaining accuracy and accountability.
